AI Agent Memory helps technology move beyond simple one-time responses. Modern AI systems face a big challenge: they lack memory. Most AI systems are powerful but suffer from digital amnesia due to poor memory management.

They forget past interactions once a session ends. The shift from basic AI tools to smart, self-improving AI agents depends on strong memory systems. This change matters for agents that handle complex goals over time, learn from results, and adapt to users.

The market shows this need clearly. The global AI agents market was worth about USD 5.40 billion in 2024 and is projected to reach USD 51.87 billion by 2030.

That’s a compound annual growth rate of about 45.8% (Virtue Market Research, 2026). This growth depends on memory-enabled agent systems.
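
The growth rate can be checked from the two market figures. The short Python sketch below assumes the rate is a compound annual growth rate over the six years from 2024 to 2030; the function name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: USD 5.40 billion (2024), USD 51.87 billion (2030).
rate = cagr(5.40, 51.87, years=2030 - 2024)
print(f"{rate:.1%}")
```

Under that assumption the result rounds to the 45.8% quoted in the text.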

Key Points You Need to Know

AI agents need memory to move beyond one-time responses and overcome digital amnesia. Without memory, agents lose context, waste effort repeating tasks, and cannot personalize experiences.

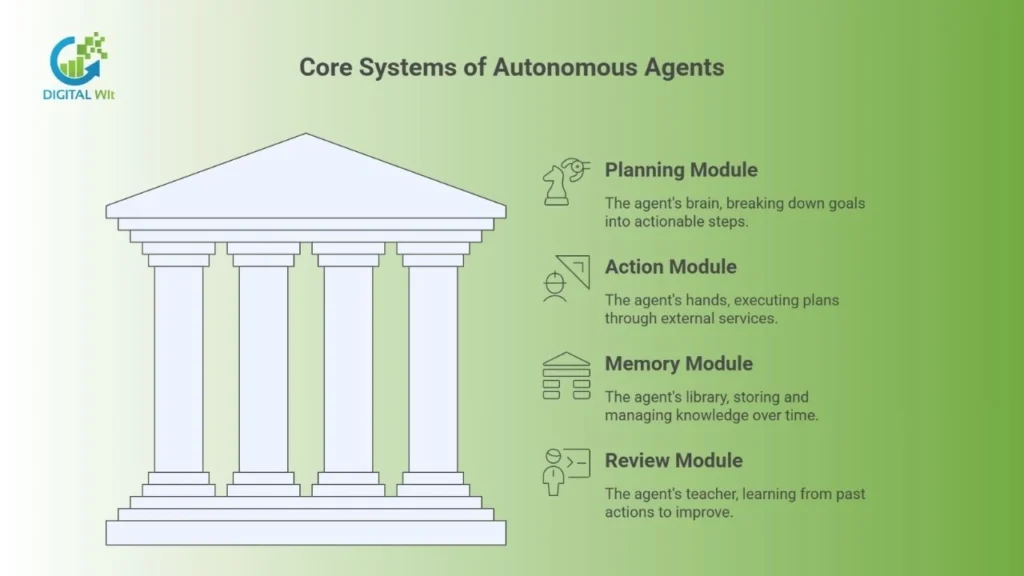

Modern agents have four core systems. These are the Planning Module, Action Module, Memory Module, and Review Module. Context windows are temporary session storage, while real memory persists in external databases.

Agent memory is read-write unlike read-only RAG systems. This enables learning and adaptation.

Why AI Agents Need Memory

AI systems have a basic flaw: no lasting memory. An agent needs to learn from past interactions.

It must pursue long-term goals on its own. Without memory, every decision starts from zero. This causes three main problems.

Lost Context means the system can’t remember conversations beyond a short window. Wasted Effort means it repeats tasks, asks the same questions, and recalculates old results. No Personalization means it treats returning users as strangers every time.

AI Agent Memory lets the agent store, recall, and use past experiences over time. This gives the agent continuity. It improves reasoning, makes better decisions, and stays consistent in complex tasks.

How Autonomous Agents Work

A smart AI agent is a system built for independent action. The brain (a powerful AI model) is important.

But the framework gives structure for memory, action, and learning. Modern autonomous agents have several core systems working together.

Key Parts of an AI Agent

The Planning Module handles strategy, using the underlying AI model as its brain. It takes a big goal and breaks it down into steps. It uses techniques like ReAct (Reasoning and Acting) to think through each step and pick the best action.

The Action Module gives the agent “hands” to work with the real world. It calls external services, runs code, accesses APIs, or searches the web. It turns plans into real actions.

The Memory Module stores the agent’s knowledge over time. It manages how information is saved, found, and used. This is what turns a basic tool into a smart, adaptive agent.

The Review Module checks how well actions worked. It creates lessons that go back into memory. This drives the agent’s growth.

What Agent Memory Really Means

Agent memory is different from simple context management. Understanding this difference is key to building smart agents.

Context Window vs. Memory

The Context Window is temporary storage during a session. It’s like short-term memory. It holds recent history for immediate use.

But it has limits and disappears when the task ends. Real long-term memory persists across sessions. It lives in external databases built for that purpose.

RAG Is a Tool, Not Memory

RAG (Retrieval-Augmented Generation) helps find information. Old RAG systems are “read-only,” limiting their ability to function as memory in AI agents.

They add static documents to the agent’s input. Agent Memory is read-write. It still uses RAG-style retrieval to find information.

But it also writes new knowledge and changes or deletes what it already holds (Leonie Monigatti, 2026). This ability to write new memories gives the agent the power to learn and adapt.
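
The read-only versus read-write difference can be shown in a few lines of Python. This is a minimal sketch, not a real RAG or memory library: the class names `ReadOnlyRetriever` and `AgentMemory` are made up for illustration, and a simple keyword match stands in for real retrieval.

```python
from dataclasses import dataclass, field


@dataclass
class ReadOnlyRetriever:
    """RAG-style lookup over a fixed document set: it can only read."""
    documents: tuple[str, ...]

    def search(self, query: str) -> list[str]:
        # Naive keyword match stands in for real retrieval.
        return [d for d in self.documents if query.lower() in d.lower()]


@dataclass
class AgentMemory:
    """Read-write store: the agent can add, revise, and delete entries."""
    entries: dict[str, str] = field(default_factory=dict)

    def write(self, key: str, text: str) -> None:
        self.entries[key] = text      # create a new entry or overwrite an old one

    def delete(self, key: str) -> None:
        self.entries.pop(key, None)   # forget the entry; unknown keys are ignored

    def search(self, query: str) -> list[str]:
        return [t for t in self.entries.values() if query.lower() in t.lower()]


memory = AgentMemory()
memory.write("user_tz", "User timezone is UTC")
memory.write("user_tz", "User timezone is CET")       # revise an earlier belief
memory.write("old_note", "User timezone was unknown")
memory.delete("old_note")                             # remove a stale entry
print(memory.search("timezone"))
```

Only the revised entry survives, which is the behavior a read-only retriever cannot offer.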

Short-Term Memory: The Scratchpad

The scratchpad is the agent’s workspace for current tasks. It keeps things running smoothly during active work.

How It Works

Short-term memory holds active context for the current session. It stores the steps of the current plan. This keeps the agent on track during complex work.

It typically uses a fixed-size buffer. Its contents last only as long as the session stays active. When the session ends, the memory vanishes.

You get coherence but not persistence.
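
A fixed-size buffer like this is easy to sketch in Python. The example below assumes a limit counted in messages (real systems usually count tokens), and the `ShortTermMemory` class is a hypothetical name used only for illustration.

```python
from collections import deque


class ShortTermMemory:
    """Fixed-size scratchpad that lives only as long as the session object."""

    def __init__(self, max_items: int = 3) -> None:
        # deque(maxlen=...) silently drops the oldest item once it is full.
        self._buffer: deque[str] = deque(maxlen=max_items)

    def add(self, message: str) -> None:
        self._buffer.append(message)

    def context(self) -> list[str]:
        return list(self._buffer)


session = ShortTermMemory(max_items=3)
for step in ["plan trip", "check flights", "compare prices", "book hotel"]:
    session.add(step)
print(session.context())  # the oldest step, "plan trip", has been evicted
```

Nothing here is written to disk, so the contents are gone once `session` is discarded. That is the coherence-without-persistence trade-off.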

Long-Term Memory: The Library

Long-Term Memory is the base for a truly independent agent. It stores knowledge across sessions for continuous learning.

How It Works – Vectors and Search

Vector Embeddings work like this. When something needs to be remembered, it becomes a vector embedding. A special model creates this.

It’s a numerical form of the information’s meaning. Vector Database Storage is the next step. These vectors go into a specialized database (like Pinecone, Weaviate, or Qdrant).

Semantic Search happens when the agent needs context. It converts the current question into a vector. It searches for similar stored memories.

These get passed back to the agent for its next step.
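
The embed, store, and search steps can be sketched end to end in plain Python. This is a toy under loud assumptions: `embed` just counts words, so it matches on shared words rather than true meaning, and `VectorStore` is an in-memory stand-in for a vector database. A real system would call an embedding model and a database such as the ones named above.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[Counter, str]] = []

    def add(self, text: str) -> None:
        self._items.append((embed(text), text))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)  # convert the question into a vector
        ranked = sorted(self._items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


store = VectorStore()
store.add("user prefers email over phone calls")
store.add("deployment failed because of a missing api key")
store.add("user is based in berlin")
print(store.search("why did the deployment fail", k=1))
```

The query and the second memory share the word "deployment", so that memory ranks first. A learned embedding would also match paraphrases that share no words at all.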

Why Long-Term Memory Matters

Lasting memory creates business value. Goal Persistence means the agent remembers ongoing projects across many interactions.

Better Personalization means it remembers user preferences, communication style, and past issues for tailored service. Learning from Experience means it stores records of what worked and what failed, improving over time.

Three Types of Long-Term Memory

Agent memory splits into three types. Together they build a complete knowledge base.

| Memory Type | What It Stores | What It Does | What It Enables |
|---|---|---|---|
| Episodic Memory | Specific events with timestamps | Stores the “what,” “where,” and “when” | Self-reflection, debugging, and traceability |
| Semantic Memory | General facts and concepts | Stores the “what” and “why” | Understanding context and building knowledge |
| Procedural Memory | Successful action sequences | Stores the “how-to” knowledge | Efficient automation and skill reuse |
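
One way to picture the three types is as three record shapes inside one store. The dataclasses below are an illustrative sketch; the field names and the `LongTermMemory` container are assumptions, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Episode:
    """Episodic: one specific event, stamped with when it happened."""
    description: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class Fact:
    """Semantic: a general statement that is not tied to a single event."""
    subject: str
    statement: str


@dataclass
class Procedure:
    """Procedural: an ordered sequence of steps that worked before."""
    name: str
    steps: list[str]


@dataclass
class LongTermMemory:
    episodic: list[Episode] = field(default_factory=list)
    semantic: list[Fact] = field(default_factory=list)
    procedural: dict[str, Procedure] = field(default_factory=dict)


ltm = LongTermMemory()
ltm.episodic.append(Episode("Password reset email bounced for user 42"))
ltm.semantic.append(Fact("user 42", "Primary email address is outdated"))
ltm.procedural["reset_password"] = Procedure(
    "reset_password", ["verify identity", "update email", "send reset link"]
)
print(len(ltm.episodic), len(ltm.semantic), list(ltm.procedural))
```

The episode records what happened, the fact records what the agent concluded from it, and the procedure records how to handle the situation next time.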

How Agents Learn and Improve

True intelligence comes from continuous feedback using memory. Agents get smarter through a cycle of actions and reflections.

The Learning Cycle

The agent grows smarter through Plan → Act → Observe → Reflect → Update. Memory plays a role in every step.

Plan means the agent finds existing knowledge to guide the plan. Act means the agent executes the plan. Observe means the agent records what happened.

Reflect means the review system checks if the plan worked. Update means the agent writes the lesson back to memory. This process is efficient.

Memory-enabled systems of this kind reportedly achieve a 58.8% memory reuse rate, compared to 0% for older static systems (Cognitive Workspace).
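
The cycle can be sketched as a single function. This is a simplified simulation under stated assumptions: `run_cycle` and `LessonMemory` are hypothetical names, and the `works` set stands in for the real world deciding whether an action succeeds.

```python
from dataclasses import dataclass, field


@dataclass
class LessonMemory:
    """Maps a task name to the approach that last worked for it."""
    lessons: dict[str, str] = field(default_factory=dict)


def run_cycle(task: str, memory: LessonMemory, approaches: list[str],
              works: set[str]) -> list[str]:
    """Run Plan -> Act -> Observe -> Reflect -> Update; return the approaches tried."""
    tried: list[str] = []
    # Plan: put a remembered approach first, then fall back to the other options.
    remembered = memory.lessons.get(task)
    plan = ([remembered] if remembered else []) + [a for a in approaches if a != remembered]
    for approach in plan:
        tried.append(approach)                # Act: execute the step (simulated)
        succeeded = approach in works         # Observe: record the outcome
        if succeeded:                         # Reflect: did the plan work?
            memory.lessons[task] = approach   # Update: write the lesson back
            break
    return tried


memory = LessonMemory()
options = ["retry request", "refresh token", "restart service"]
first = run_cycle("fix 401 error", memory, options, works={"refresh token"})
second = run_cycle("fix 401 error", memory, options, works={"refresh token"})
print(first)   # explores until something works
print(second)  # starts from the stored lesson
```

The second run needs fewer attempts because the lesson from the first run was written back to memory.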

Managing Memory

Memory needs active management to stay efficient. Without good management, the system becomes slow and cluttered.

Smart Forgetting means the system removes or combines old, low-value memories. This prevents bloat and keeps searches fast. Memory Consolidation means it regularly converts detailed memories into general facts.

This creates a cleaner knowledge base.
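
Both ideas can be sketched with a simple scoring rule. The `value` formula below is a made-up heuristic (more use and more recent use score higher), and `consolidate` only counts items where a real system would likely ask a model to summarize them.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    text: str
    age_days: int   # days since the memory was last used
    uses: int       # how often it has been retrieved


def value(item: MemoryItem) -> float:
    """Hypothetical score: frequently and recently used memories rank higher."""
    return (1 + item.uses) / (1 + item.age_days)


def forget(items: list[MemoryItem], keep: int) -> list[MemoryItem]:
    """Smart forgetting: keep only the `keep` highest-value memories."""
    return sorted(items, key=value, reverse=True)[:keep]


def consolidate(items: list[MemoryItem], topic: str) -> MemoryItem:
    """Consolidation: fold several detailed memories into one general fact."""
    return MemoryItem(
        text=f"{topic}: observed {len(items)} times",
        age_days=min(i.age_days for i in items),
        uses=sum(i.uses for i in items),
    )


items = [
    MemoryItem("user asked for CSV export", age_days=2, uses=5),
    MemoryItem("user asked for CSV export again", age_days=1, uses=2),
    MemoryItem("user mentioned the weather", age_days=90, uses=0),
]
kept = forget(items, keep=2)
summary = consolidate(kept, topic="CSV export requests")
print([m.text for m in kept])
print(summary.text)
```

The stale, never-used weather remark is dropped, and the two export requests collapse into one general fact.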

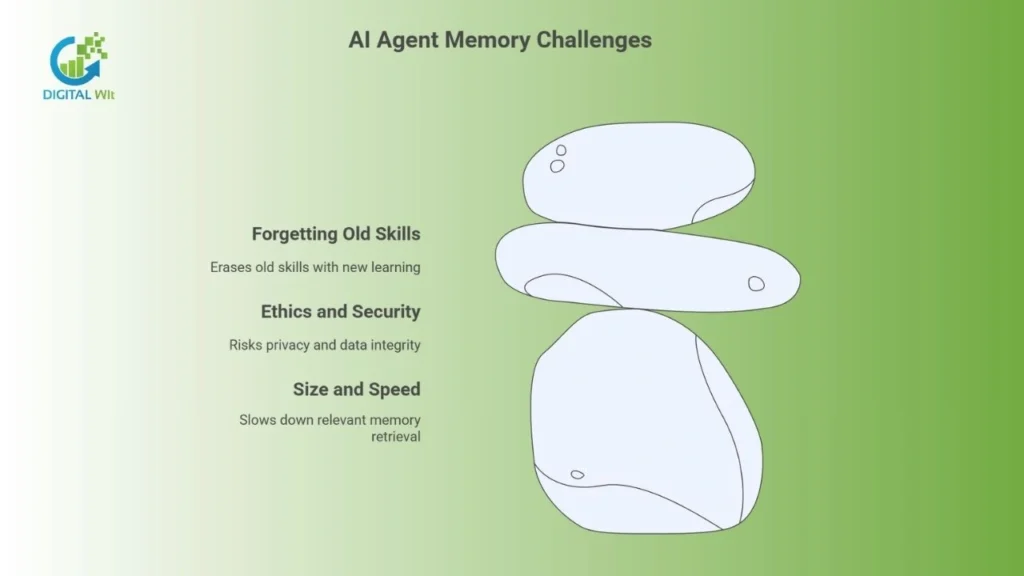

Key Challenges

Lasting memory in AI agents creates real problems that need solutions. These challenges affect speed, ethics, and learning.

Size and Speed Issues

The main problem is getting relevant memory back into the agent’s limited workspace. Two technical issues stand out.

Too Much Information means finding too many irrelevant bits along with what’s needed. Slow Retrieval means as memory grows to millions of entries, search speed slows down.
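
A common way to limit irrelevant results is to cap how many memories come back and drop anything below a similarity cutoff. The sketch below assumes the retriever has already produced similarity scores; the scores, the `top_k` helper, and the 0.5 cutoff are invented for illustration.

```python
import heapq


def top_k(scored: list[tuple[float, str]], k: int, min_score: float) -> list[str]:
    """Return at most k memories, dropping anything below min_score."""
    relevant = [pair for pair in scored if pair[0] >= min_score]
    best = heapq.nlargest(k, relevant, key=lambda pair: pair[0])
    return [text for _, text in best]


# (similarity, memory) pairs as a retriever might return them; values are made up.
candidates = [
    (0.91, "invoice 118 was refunded on request"),
    (0.84, "customer prefers refunds to store credit"),
    (0.35, "customer asked about opening hours"),
    (0.12, "office plants were watered"),
]
print(top_k(candidates, k=3, min_score=0.5))
```

Even though `k` is 3, only the two memories above the cutoff reach the agent. This sketch scores every entry, which is the slow part at scale. Vector databases commonly use approximate nearest-neighbor indexes to avoid that.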

Ethics and Security Risks

Storing huge amounts of personal data creates risks. Privacy in memory management is crucial for AI systems.

Privacy rules require strong encryption and user control (like the right to delete data). Bias Growth means that if memories come from biased data, those biases grow and spread to the agent’s future decisions. False Memories mean an agent might store wrong information as correct, poisoning future reasoning.
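
User control is easier to honor when memories are stored per user from the start. The sketch below shows only the deletion side, assuming one in-memory list per user; `UserScopedMemory` is a hypothetical class, and encryption is deliberately left out.

```python
from collections import defaultdict


class UserScopedMemory:
    """Keeps each user's memories separate so they can be erased on request."""

    def __init__(self) -> None:
        self._by_user: defaultdict[str, list[str]] = defaultdict(list)

    def remember(self, user_id: str, text: str) -> None:
        self._by_user[user_id].append(text)

    def recall(self, user_id: str) -> list[str]:
        return list(self._by_user.get(user_id, []))

    def forget_user(self, user_id: str) -> int:
        """Delete everything stored for a user; return how many items were removed."""
        return len(self._by_user.pop(user_id, []))


store = UserScopedMemory()
store.remember("alice", "prefers email contact")
store.remember("alice", "allergic to penicillin")
store.remember("bob", "uses the mobile app")
removed = store.forget_user("alice")
print(removed, store.recall("alice"), store.recall("bob"))
```

In a production system the same delete would also have to reach backups, derived summaries, and any vector index built from the data.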

Forgetting Old Skills

In learning systems, gaining new skills can erase old ones. This is called Catastrophic Forgetting.

Research aims to balance learning new things with keeping old knowledge through better memory techniques.

Real-World Use and Tech Stack

Memory-enabled agents are changing key industries. They’re moving from tests to live systems.

Real Applications

Customer Service shows how agents remember full customer history and past solutions for personalized support. This drives North American market growth (Grand View Research).

Developer Tools show how agents use memory management to keep debugging knowledge and code patterns. They can fix bugs and manage pipelines in minutes (Top AI Agent Models, SO-Development). Healthcare shows how agents store patient histories, treatment results, and medication details.

This leads to personalized clinical support. Healthcare will see some of the highest growth rates.

Required Technology

Building agent memory needs specialized tools. Each component plays a specific role in the system.

| Component | Key Tools | What It Does |
|---|---|---|
| Frameworks | LangChain, LlamaIndex, AutoGen, CrewAI | Run the plan-act-observe loop and provide memory interfaces |
| Vector Databases | Pinecone, Weaviate, Qdrant | Store numerical forms of memories at scale |
| Memory Tools | Redis, Knowledge Graphs (Neo4j) | Enable fast temporary memory and structured relationships |
| Embedding Models | OpenAI, Cohere | Create rich vectors for accurate retrieval |

What’s Next for AI Agent Memory

Future advances focus on creating human-like memory systems. Research is pushing toward more natural and flexible designs.

New Designs

Layered Memory means moving beyond just short and long-term to include mid-term memory for recent summaries. This mimics how the human brain works.

Multimodal Memory means letting agents store and find memories that include text, images, video, and audio together. Graph-Based Memory means using Knowledge Graphs to store memories. This lets the agent reason about relationships between facts for better logic.
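
Graph-based memory can be pictured as a set of subject, relation, object triples that the agent walks to connect facts. The sketch below is a tiny in-memory version, not a graph database client; `GraphMemory` and the example facts are invented for illustration.

```python
from collections import defaultdict


class GraphMemory:
    """Stores facts as (subject, relation, object) triples."""

    def __init__(self) -> None:
        self._edges: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self._edges[subject].append((relation, obj))

    def related(self, start: str, max_hops: int = 2) -> set[str]:
        """Collect every node reachable from `start` within max_hops edges."""
        seen: set[str] = set()
        frontier = {start}
        for _ in range(max_hops):
            next_frontier: set[str] = set()
            for node in frontier:
                for _relation, obj in self._edges.get(node, []):
                    if obj not in seen and obj != start:
                        seen.add(obj)
                        next_frontier.add(obj)
            frontier = next_frontier
        return seen


graph = GraphMemory()
graph.add("Alice", "works_at", "Acme")
graph.add("Acme", "uses", "PostgreSQL")
graph.add("PostgreSQL", "runs_on", "Linux")
print(sorted(graph.related("Alice", max_hops=2)))
```

No single stored fact links Alice to PostgreSQL, yet a two-hop walk finds the connection. That is the kind of relationship reasoning a flat list of memories does not give you.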

Self-Managing Memory

The end goal is letting the agent decide on its own. Agents will make smart choices about their own memory.

What to store means which observations are worth recording. Where to store it means whether it goes into episodic, procedural, or semantic memory. When to forget means which memories are old or redundant and should be removed.

This evolution will make the AI agent a complex, continuously improving, truly independent system.

Conclusion

AI agents are moving from simple chatbots to smart autonomous systems. Memory is the foundation of their intelligence.

The mix of episodic, semantic, and procedural memory lets these agents learn continuously and execute complex workflows efficiently. For organizations wanting to use memory-enabled AI agents, working with an experienced AI team is key. Digital Wit builds enterprise-grade AI agent solutions with advanced memory systems.

We help businesses unlock the full potential of autonomous AI to drive innovation and enhance customer experiences.

Frequently Asked Questions On AI Agent Memory

How do AI agents work?

They perceive their environment using sensors, process the information, reason and plan, and then act using effectors to achieve specific goals autonomously.

What are the types of AI agents?

The main types are Simple Reflex Agents, Model-based Reflex Agents, Goal-based Agents, Utility-based Agents, and Learning Agents.

How to create an AI agent?

The process generally involves defining its purpose, choosing appropriate tools/frameworks (like LLMs and Python), gathering and preparing data, designing the workflow (perception, planning, action), developing, testing, and continuously monitoring/optimizing.

What are the functions of an AI agent?

Their core functions are Perception (gathering input), Decision-Making/Reasoning (processing information to plan), and Action (executing decisions, often using external tools).

Why does AI use so much memory?

AI, especially for complex tasks and machine learning, requires vast amounts of memory (RAM/storage) to hold the enormous datasets for training, the large models themselves, and to manage the context/state for reasoning and learning over time.

What is an example of an agent in AI?

A self-driving car is a complex example, while a simpler one is a smart thermostat (a simple reflex agent).

Is Alexa an AI agent?

Alexa is generally classified as an AI assistant. It uses AI but is typically more reactive to user prompts and has less autonomy for complex, multi-step goal achievement than a fully autonomous AI agent.